Spatial Computing Lab

Welcome to the Spatial Computing Laboratory at IIIT Bangalore.

ABOUT US

We research technologies in the field of spatial computing.

“The Spatial Computing Laboratory at IIIT Bangalore aims to study a range of topics in spatial computing, spatial data science/data mining, spatial databases, geo-statistical techniques and time-series forecasting models. The common thread in our research is to apply advances in geospatial technologies, data science, artificial intelligence and statistical methods to research problems at the interface of computer science and spatio-temporal data from Earth observing systems.”

The Lab is driven by dedicated researchers who contribute new spatial data science capabilities to computing ideas and technologies in interdisciplinary areas such as Geographic Information Systems (GIS) and Remote Sensing, Geoinformatics, Applied Machine Learning, Time Series Data Analysis, Big Data Analytics, Geo-Intelligence (Artificial and Location Intelligence), Geo-Smart solutions for Digital Agriculture, Urban Informatics, and geospatial applications in Natural Resource Management and Climate Studies. Our Lab members have won several Best Paper Awards, received recognition for their work, and secured competitive international travel grants.

NEWS/UPDATES

Feb 2024

Paper titled “Advancing Image Classification through Parameter-Efficient Fine-Tuning: A Study on LoRA with Plant Disease Detection Datasets” accepted at ICLR 2024.

Jan 2024

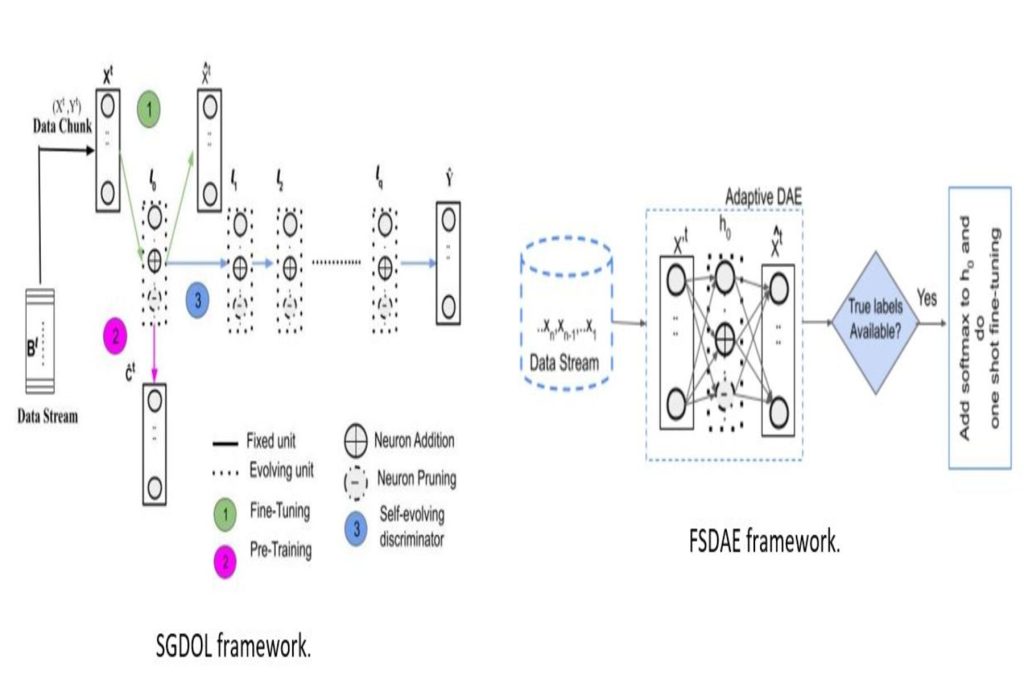

Congratulations to Yash Mittal, who successfully defended his Master of Science (MS) by Research thesis on “Incremental Learning on Spatial Data and Applications”.

Dec 2023

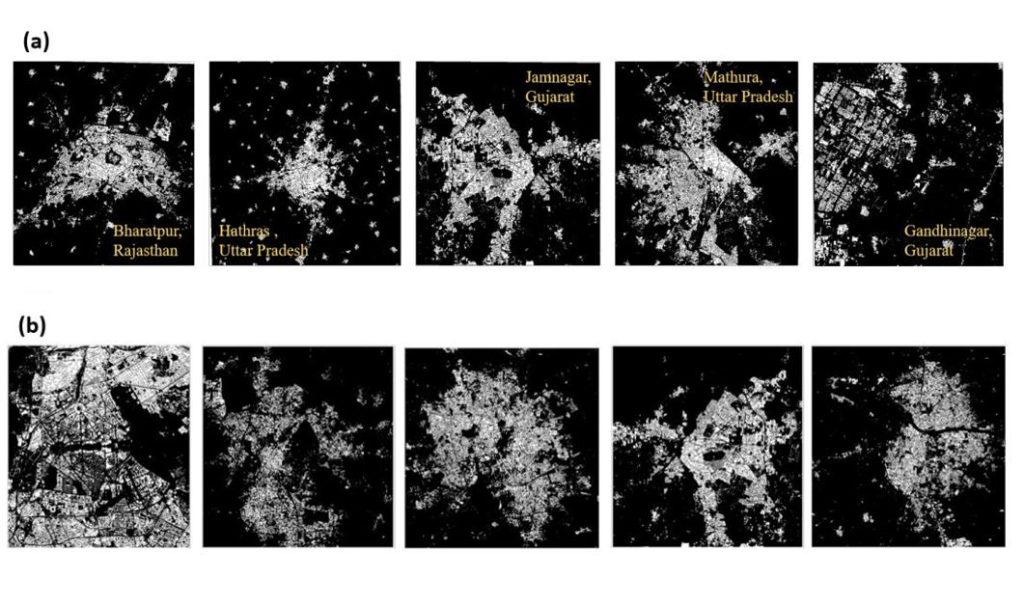

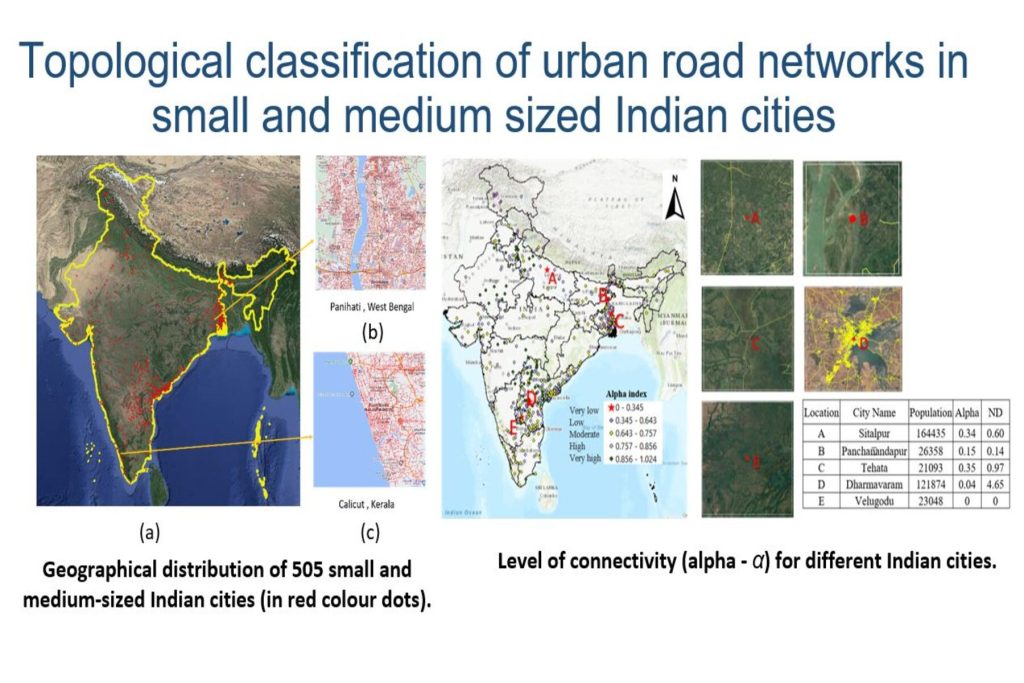

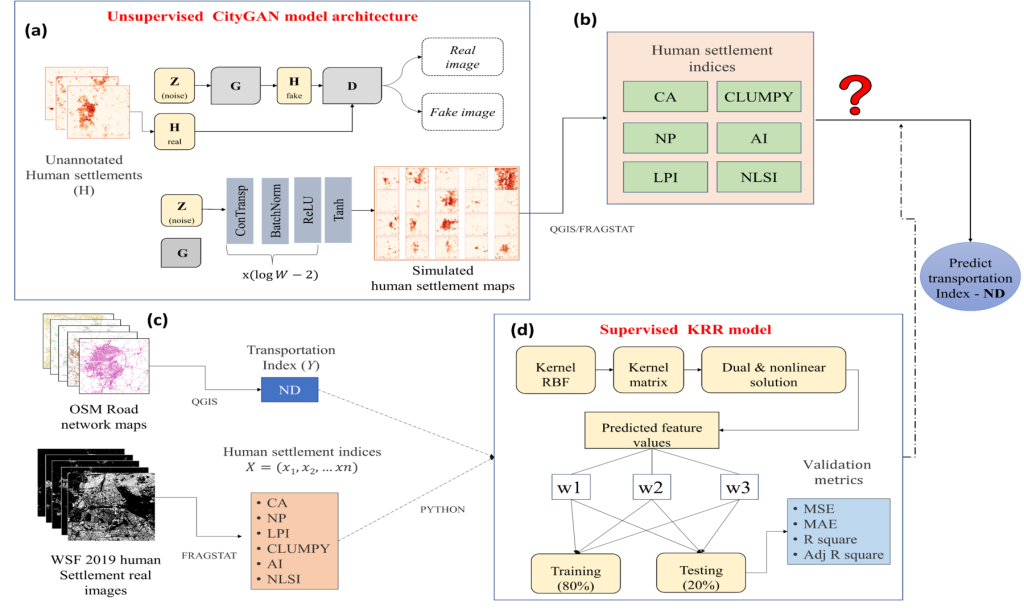

Paper titled “Prediction of Transportation Index for Urban Patterns in Small and Medium-sized Indian Cities using Hybrid RidgeGAN Model” accepted in Scientific Reports (Nature Portfolio).

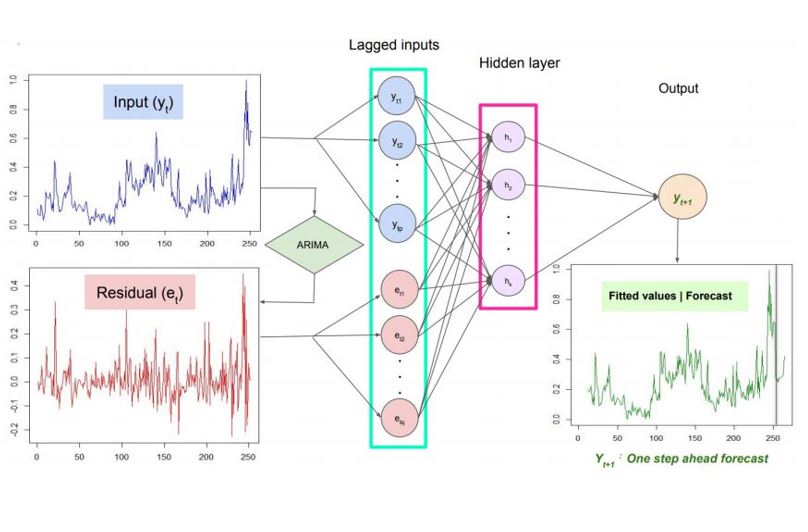

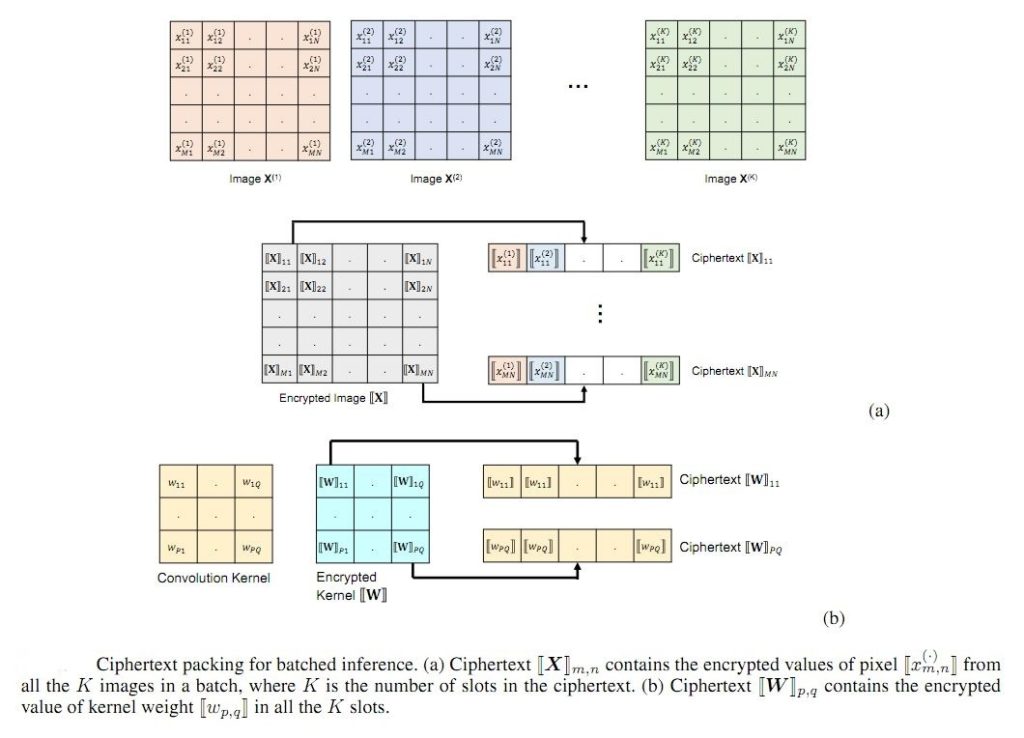

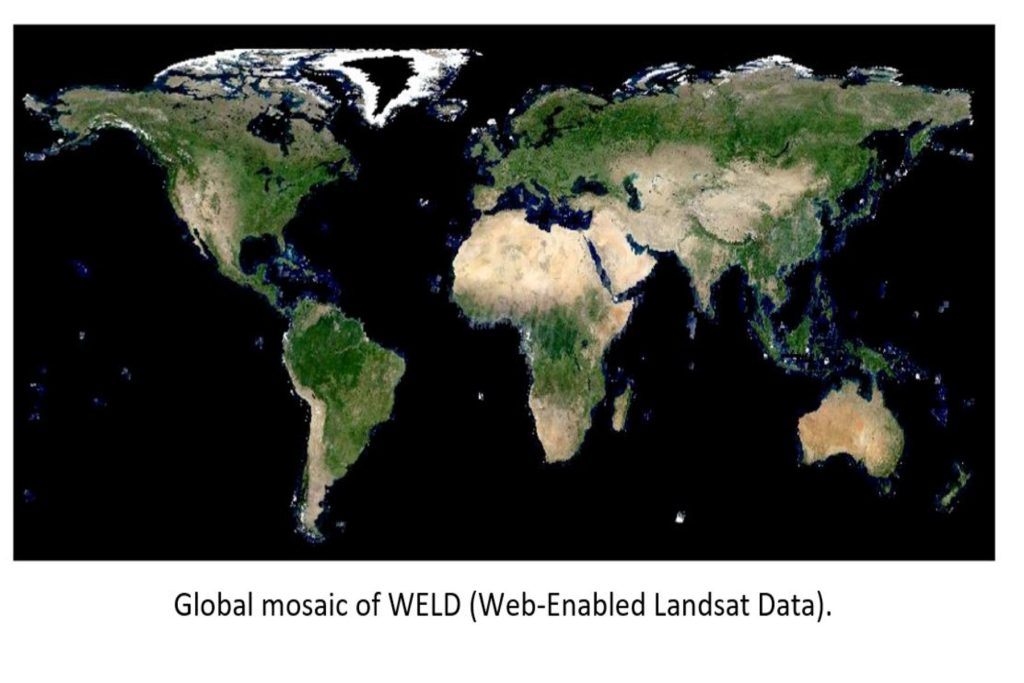

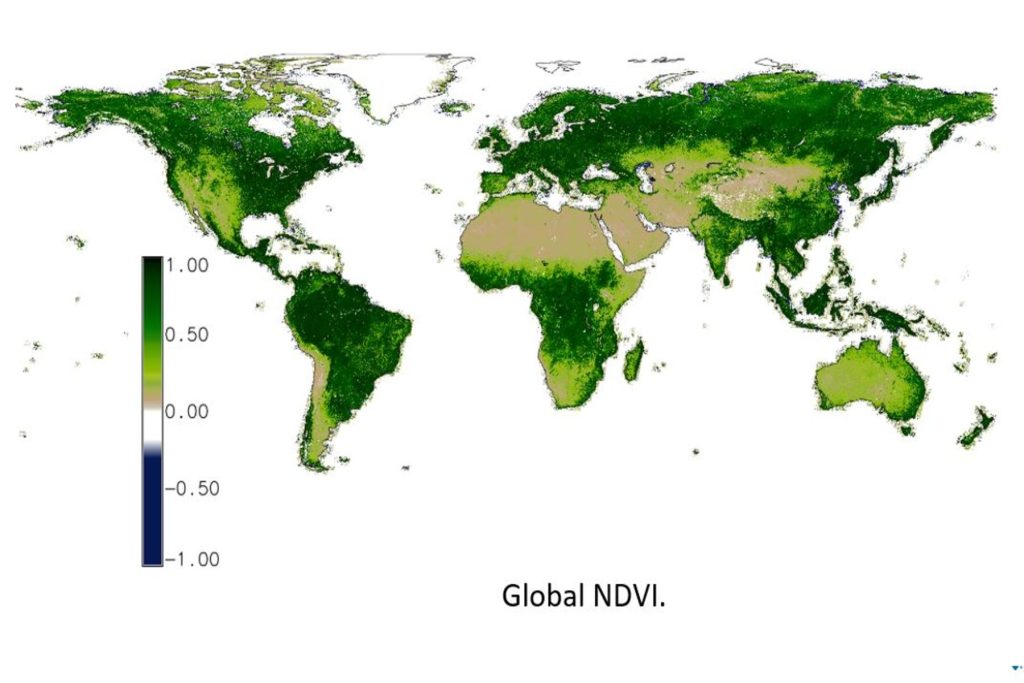

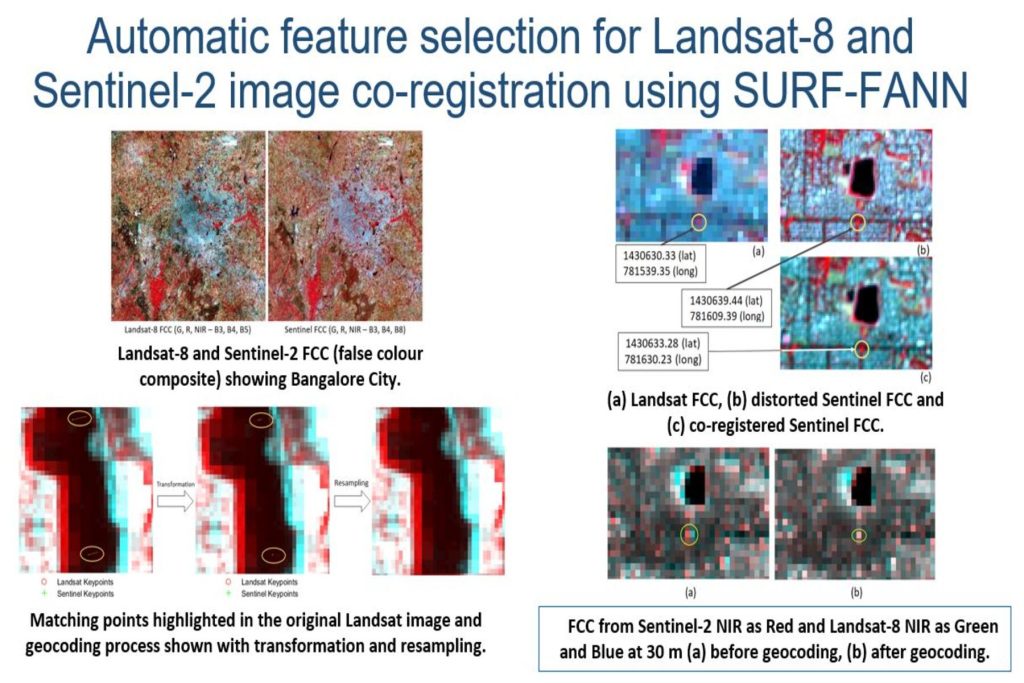

Background: The last few decades have witnessed a massive increase in Big Geodata from space-borne and airborne sensors. The goal is to provide large-scale, homogeneous processing of information from these ever-increasing multi-modal data sources, which require automated and intelligent frameworks for storage and analysis. The resurgence of machine learning and deep neural networks has revolutionised computational methods, which have found wide application in land cover and land use classification, content-based information retrieval, region-of-interest detection and temporal analysis of continuously evolving remote sensing data. Because data from many satellite missions are available in the public domain, multi-modal approaches such as multi-sensor data fusion (e.g., pan-sharpening) and cross-modal information retrieval have become extremely important. Earth observation data also underpin location-based services, online mapping, surveillance, crop monitoring, soil-moisture tracking, change detection and other applications. Our specific research interests in Computational Intelligence for Remote Sensing Data/Image Analysis include:

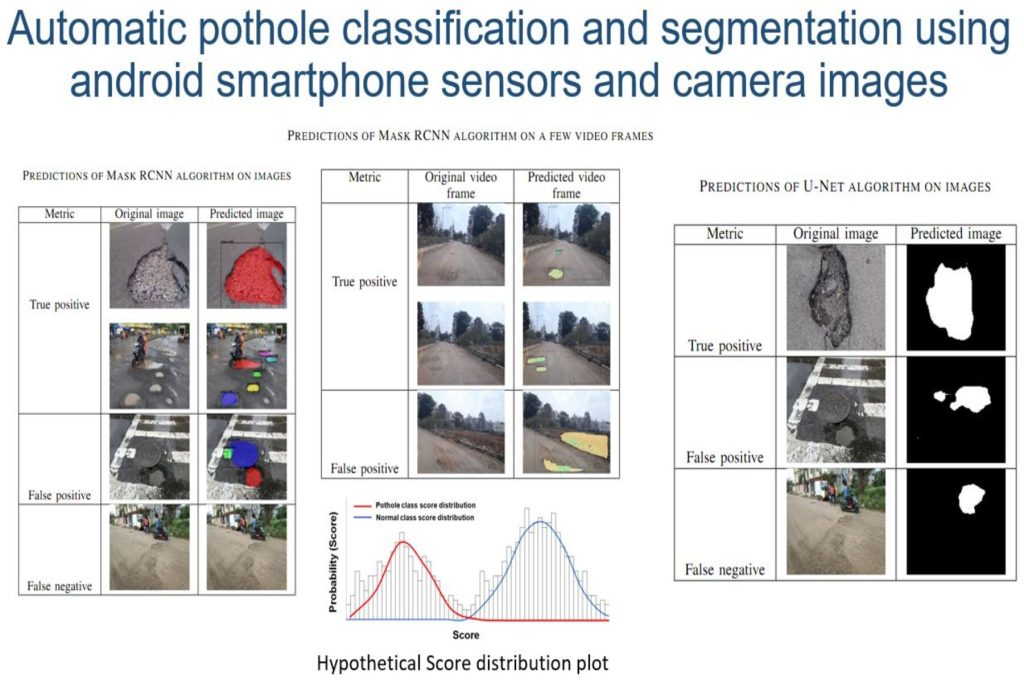

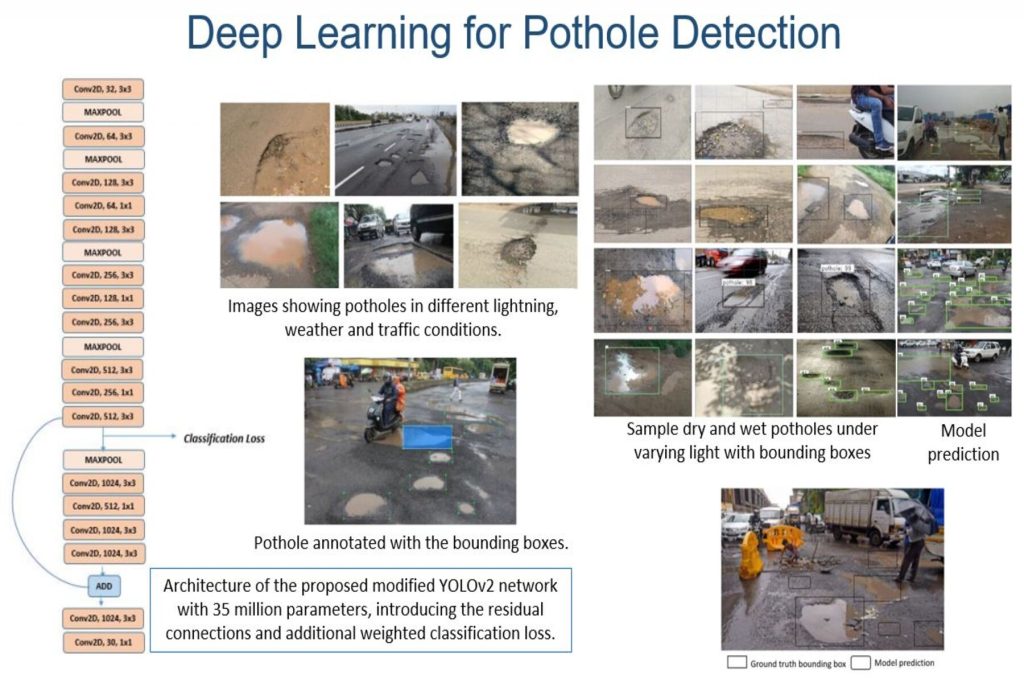

Machine learning and deep learning in geospatial science

Image understanding and synthesis

Image classification using supervised and unsupervised learning/clustering

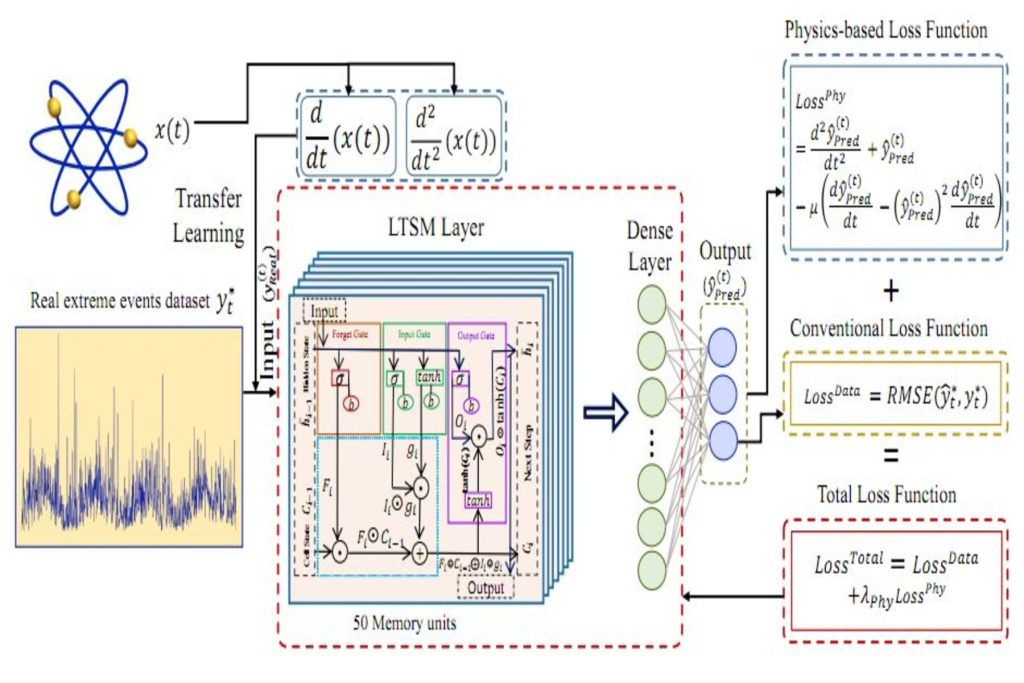

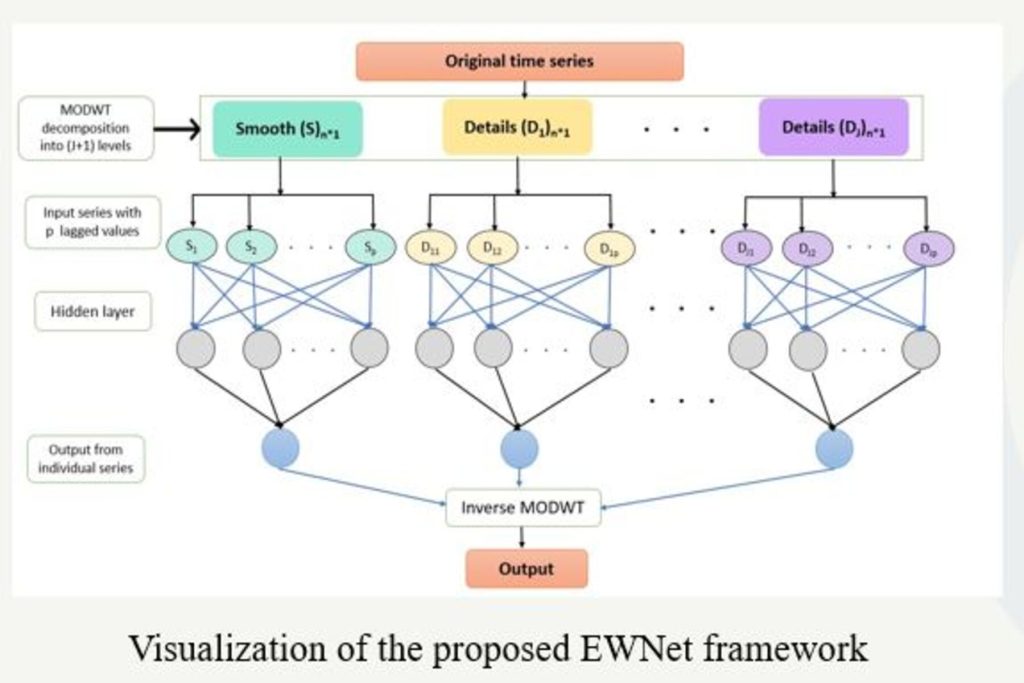

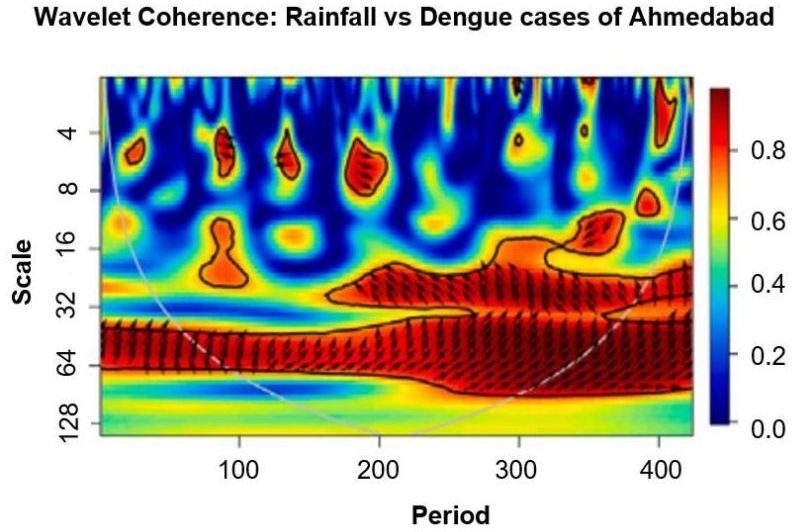

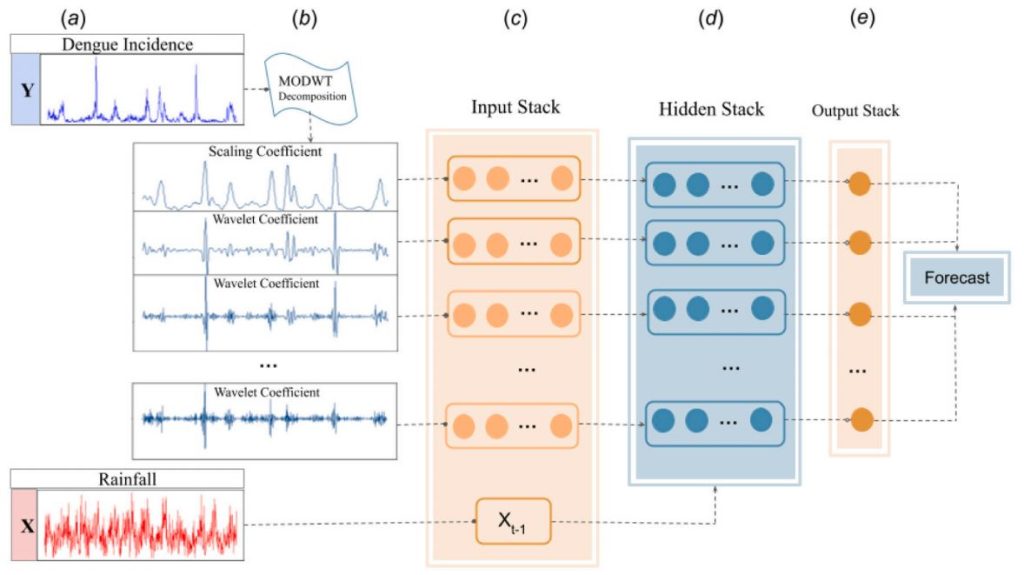

Time-series forecasting models

Spatio-temporal models

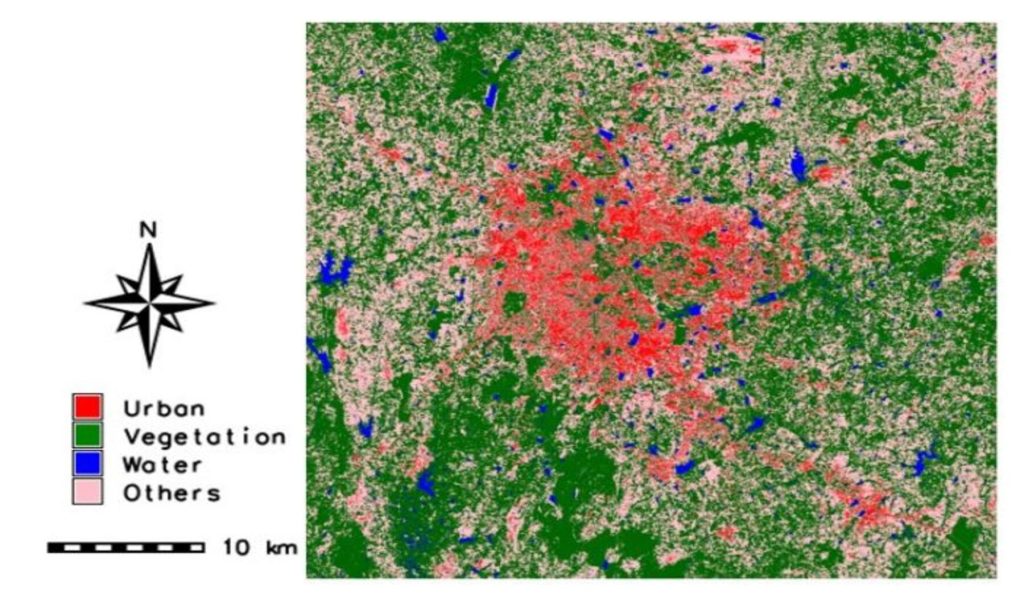

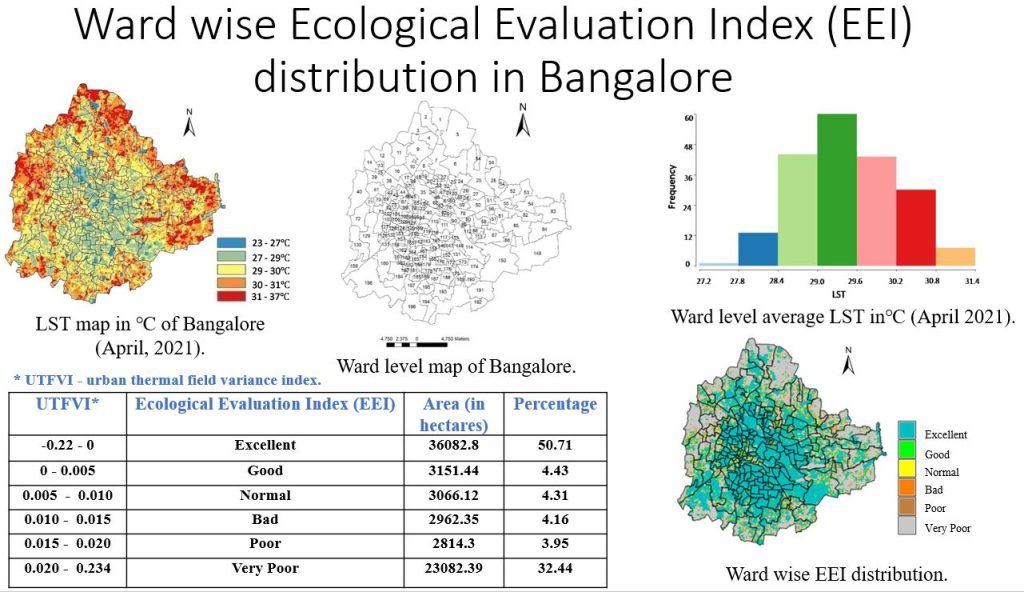

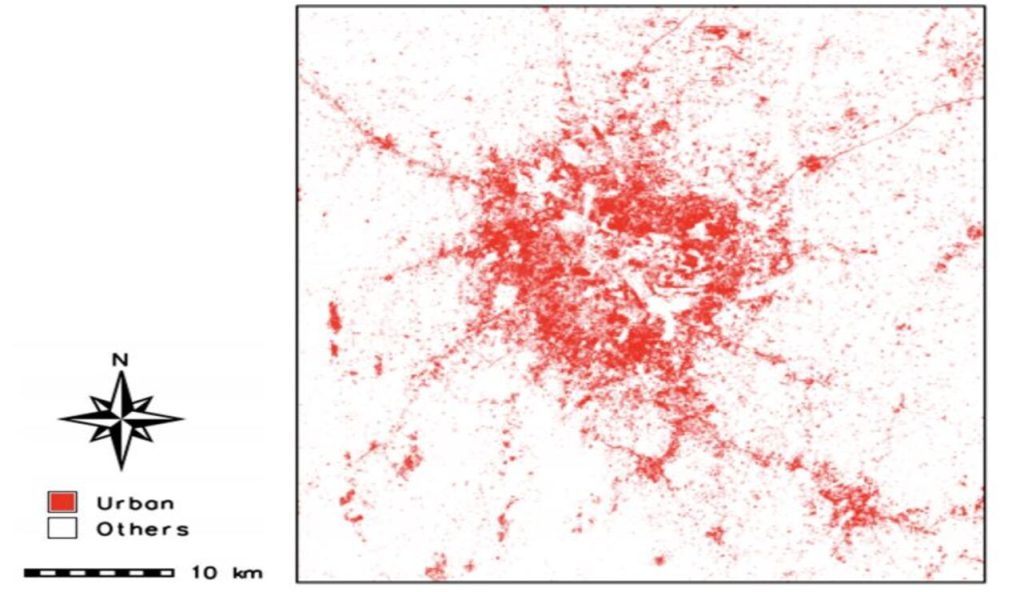

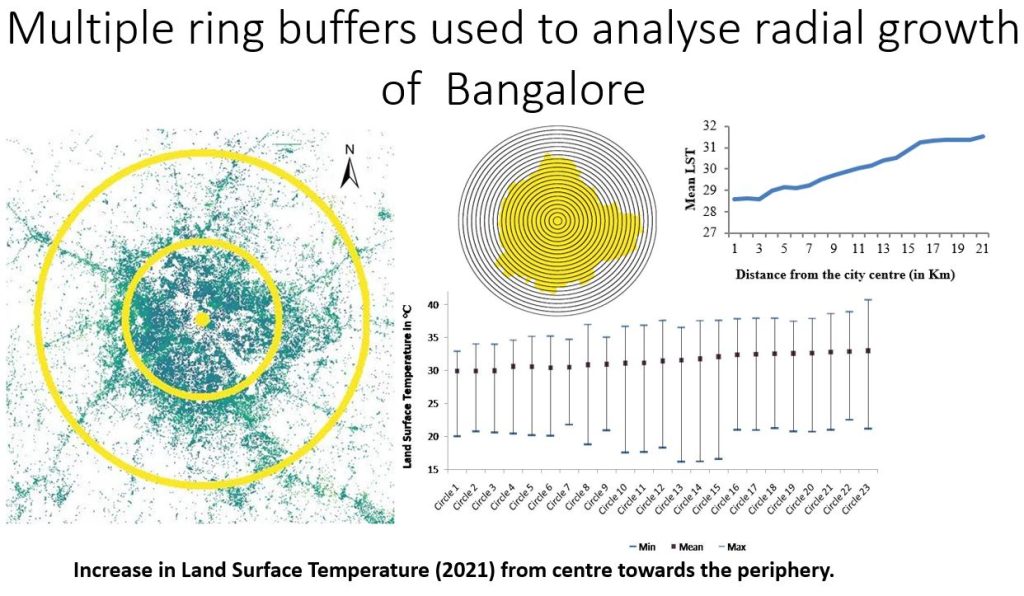

Urban growth modelling

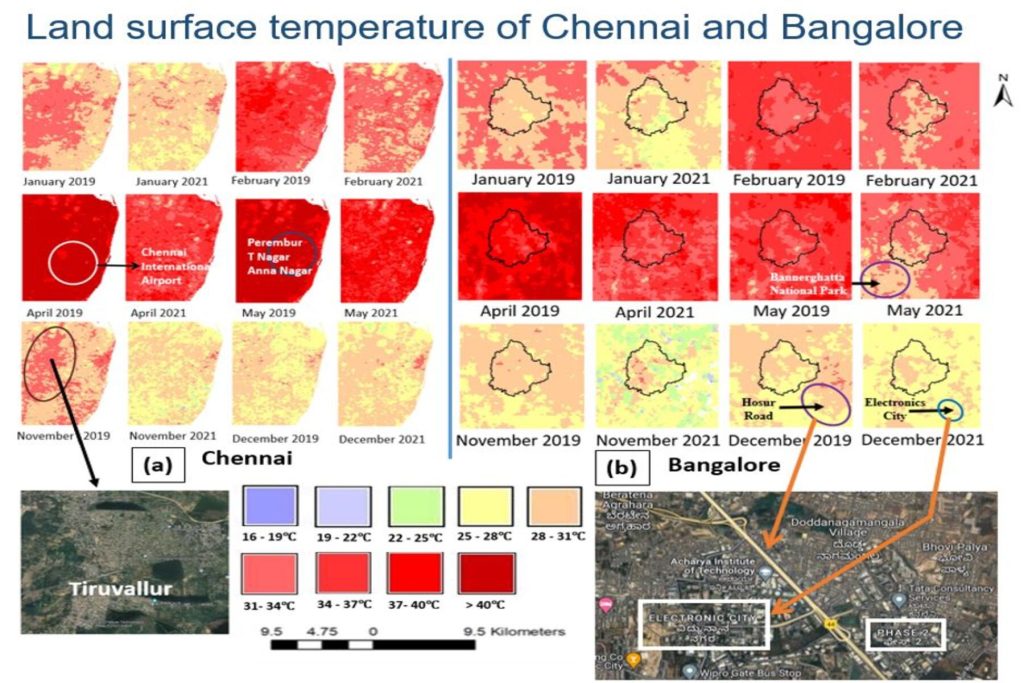

Urban climate

Digital agriculture

SAR data analysis

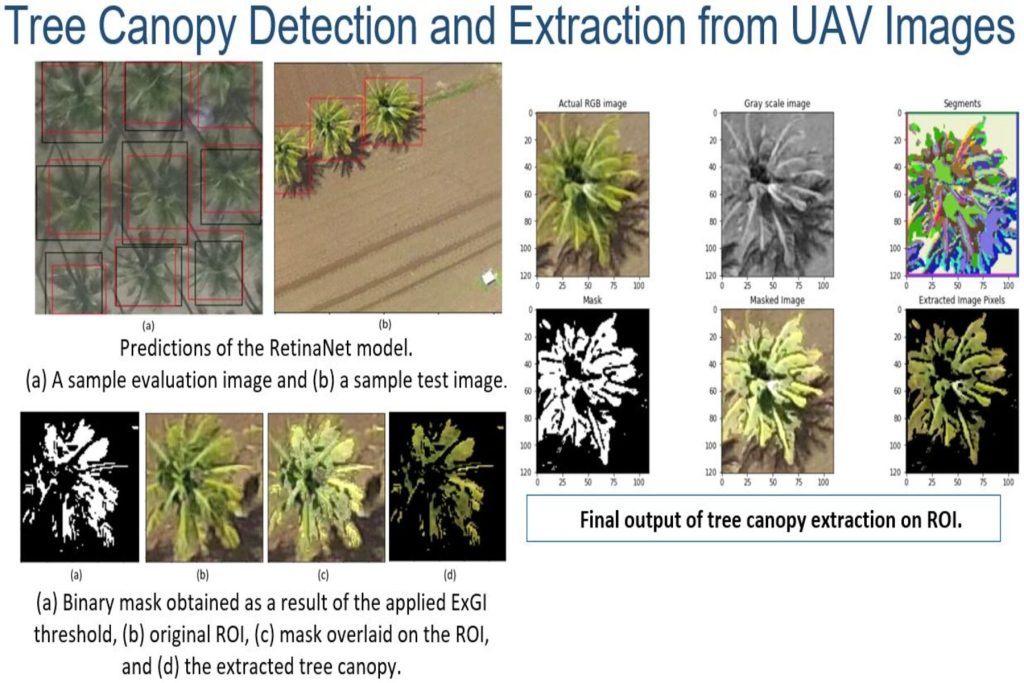

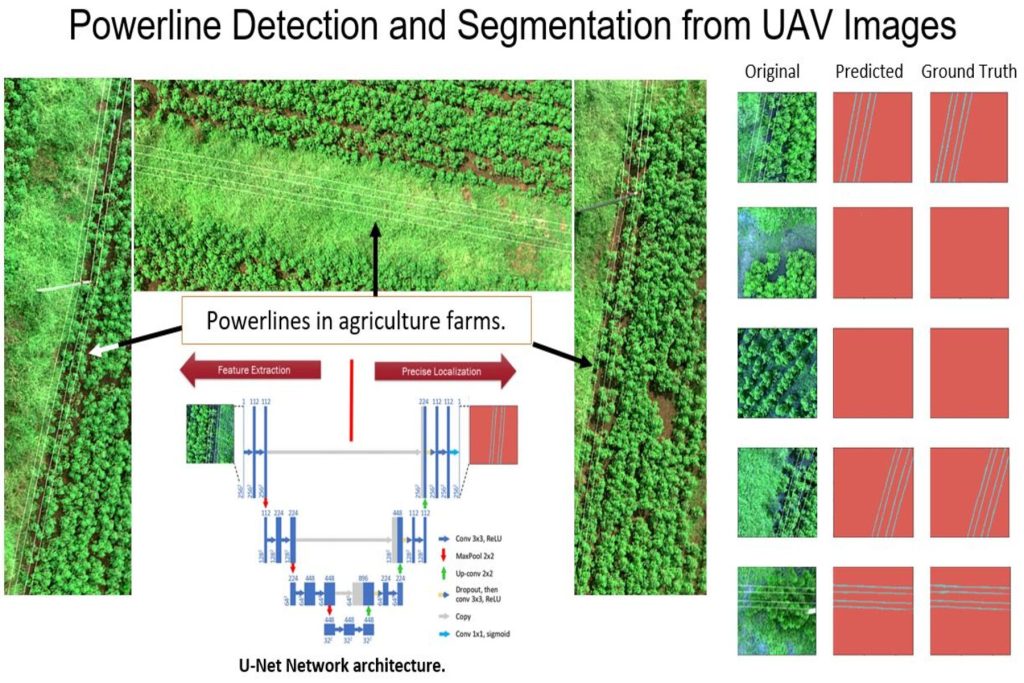

UAV data analysis

Multi-modal data fusion

Target detection and segmentation

Spectral and spatial methods

Spectral unmixing

Noise recognition and filtering

Change detection

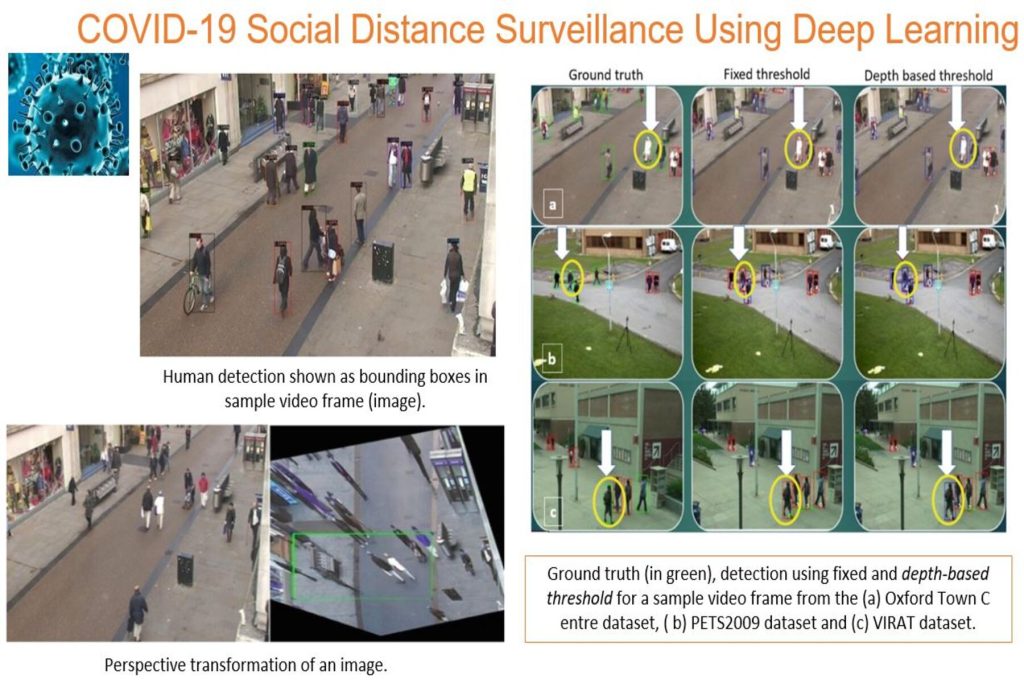

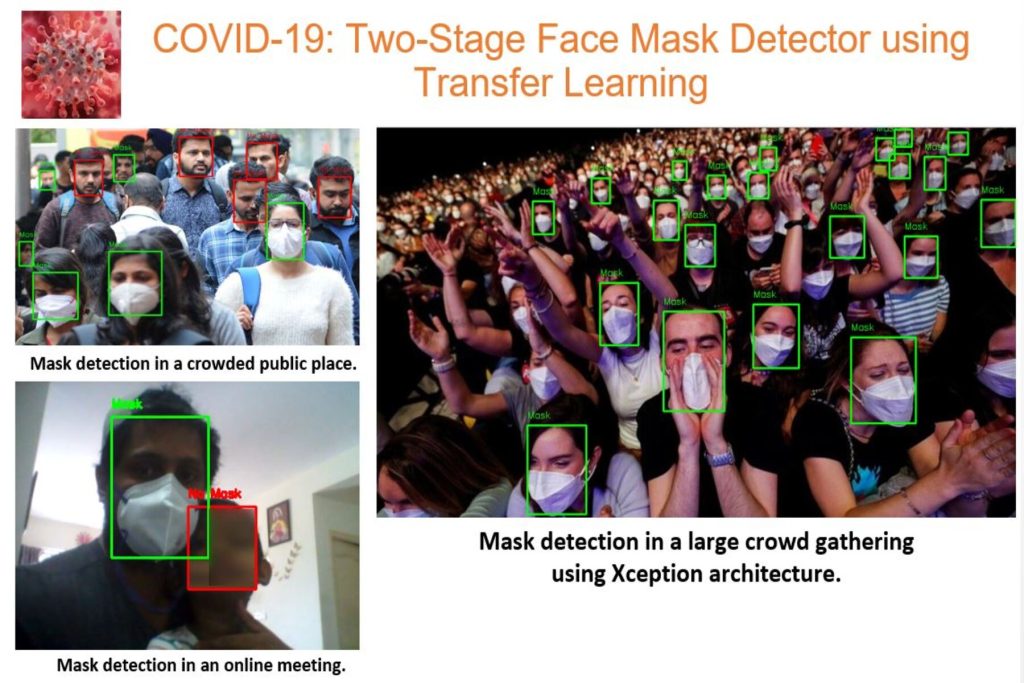

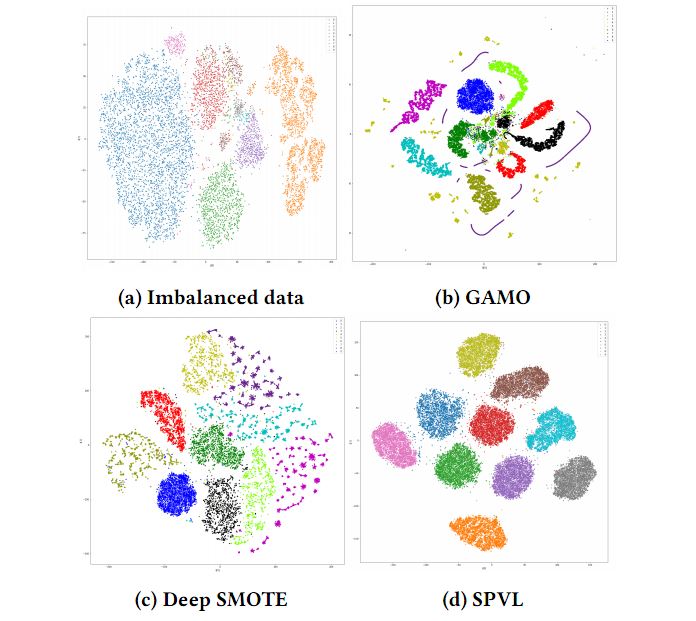

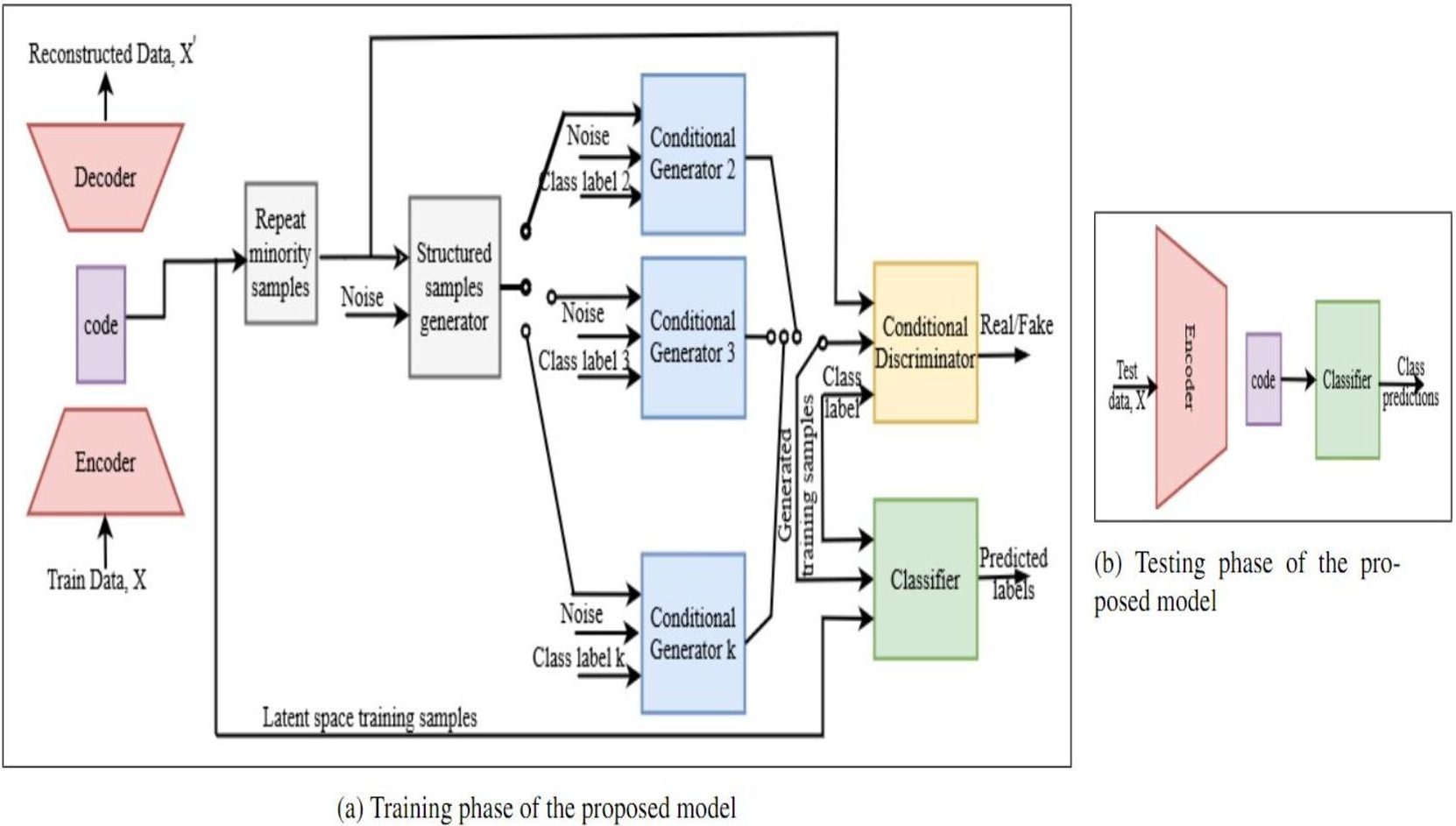

Please see our recent research snippets below.

We are located at IIIT Bangalore in Electronics City, Bangalore, the Silicon Valley of India. We also collaborate with industry, research institutions, government agencies and organisations, and universities on applied and cutting-edge research.

Partners & Sponsors

IIIT Bangalore,

26/C,

Opposite Infosys,

Electronics City Phase 1,

Hosur Road,

Bangalore – 560100. India.
